Waveforms as an Operating System: A Signal-Channel Abstraction for AI-RAN

Waveform design as a statistical abstraction layer for AI-RAN.

Abstract

Artificial intelligence-radio access network (AI-RAN) systems operating directly on in-phase/quadrature (I/Q) symbols are typically trained under fixed statistical assumptions, most notably under additive white Gaussian noise (AWGN). In practical deployments, however, wireless channels and radio frequency (RF) hardware exhibit diverse, environment-dependent distortion statistics that cannot be captured by a single training distribution. AI-generated symbols are also model-dependent and opaque, introducing uncertainty in RF front-end design. This article argues that AI-RAN requires an abstraction layer, analogous to an operating system in computing, that mediates between black-box AI models and heterogeneous wireless channels. We propose a waveform-level abstraction framework that statistically regularizes both signal and error characteristics at the AI-RF interface, making them more stable and predictable for both sides. The contribution is not a new waveform per se, but a new design paradigm for waveform construction in AI-native systems. This framework suggests a path toward train-once, deploy-broadly operation through a standardized statistical interface, supported by over-the-air experiments, and positions waveform design as an architectural tool for robust, portable, and scalable AI-native wireless systems.

Why This Matters

AI-native wireless systems are hard to scale because both sides of the interface are unstable. Learned models produce model-dependent symbol distributions, tails, and correlations. The physical link then adds residual channel, synchronization, equalization, and RF impairments that rarely match the training distribution.

The operating-system analogy turns waveform design into a systems concept. The waveform does not need to solve every task internally; it needs to create a predictable contract between AI workloads and the RF stack. That contract is what makes train-once, deploy-broadly operation plausible.

What This Paper Does

The paper defines two design goals for AI-RAN waveforms. The first is AI-facing invariance: the decoder should see an impairment law, meaning the statistical distribution of residual errors, that changes less from deployment to deployment. The second is RF-facing regularity: the hardware should see sample statistics and peak behavior that depend less on the particular model.
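One way to make AI-facing invariance concrete is to compare the error statistics a decoder would see in two different deployments, before and after a unitary de-mixing step. The sketch below is an illustration under stated assumptions, not the paper's receiver: the two error models (Gaussian and impulsive), the 5% impulse rate, the orthonormal DFT as the mixing matrix, and the names `excess_kurtosis` and `seen_by_decoder` are all hypothetical choices made for this example.

```python
import numpy as np

def excess_kurtosis(x):
    """Excess kurtosis of real samples (close to 0 for Gaussian data)."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    return float(np.mean(x**4) / np.mean(x**2) ** 2 - 3.0)

def seen_by_decoder(err):
    """Assumed unitary de-mixing: each error sample is spread over the block."""
    return np.fft.ifft(err, norm="ortho")

rng = np.random.default_rng(0)
n = 8192

# Deployment A (assumed): Gaussian residual error with unit average power.
err_a = (rng.standard_normal(n) + 1j * rng.standard_normal(n)) / np.sqrt(2)

# Deployment B (assumed): impulsive error hitting about 5% of samples,
# rescaled to the same average power as deployment A.
hit = rng.random(n) < 0.05
err_b = hit * (rng.standard_normal(n) + 1j * rng.standard_normal(n))
err_b = err_b / np.sqrt(np.mean(np.abs(err_b) ** 2))

# Gap between the two deployments' tail behavior, before and after de-mixing.
k_raw = [excess_kurtosis(e.real) for e in (err_a, err_b)]
k_mixed = [excess_kurtosis(seen_by_decoder(e).real) for e in (err_a, err_b)]
gap_raw = abs(k_raw[0] - k_raw[1])
gap_mixed = abs(k_mixed[0] - k_mixed[1])
print(f"kurtosis gap before de-mixing: {gap_raw:.2f}, after: {gap_mixed:.2f}")
```

In this toy setting the two deployments have very different tails at the channel output but look much more alike after de-mixing, which is the sense in which the impairment law "changes less". Kurtosis is only one convenient summary; it does not capture correlation or other structure.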

From this perspective, spreading, permutation, and phase dithering are not just signal-processing tricks. Spreading reshapes marginal statistics through mixing, permutation breaks harmful locality, and dithering weakens coherent structure before mixing. Together they implement an abstraction layer around the learned model without modifying the model itself.
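The three operations can be sketched as a thin, invertible wrapper around one block of model outputs. This is a minimal illustration and not the paper's construction: the orthonormal DFT used for spreading, the uniform random phases, the Laplace-distributed stand-in for heavy-tailed AI symbols, and the function names `abstraction_layer` and `invert_layer` are assumptions introduced here. The order follows the text, with dithering applied before mixing.

```python
import numpy as np

def excess_kurtosis(x):
    """Excess kurtosis of real samples (close to 0 for Gaussian data)."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    return float(np.mean(x**4) / np.mean(x**2) ** 2 - 3.0)

def abstraction_layer(symbols, rng):
    """Dither, permute, then spread one block of complex symbols."""
    n = symbols.size
    phases = np.exp(1j * rng.uniform(0.0, 2.0 * np.pi, n))  # phase dithering
    perm = rng.permutation(n)                                # break locality
    dithered = (symbols * phases)[perm]
    mixed = np.fft.fft(dithered, norm="ortho")               # unitary spreading (assumed DFT)
    return mixed, phases, perm

def invert_layer(mixed, phases, perm):
    """Undo spreading, permutation, and dithering in reverse order."""
    dithered = np.fft.ifft(mixed, norm="ortho")
    unpermuted = np.empty_like(dithered)
    unpermuted[perm] = dithered
    return unpermuted / phases

rng = np.random.default_rng(0)
n = 16384
# Hypothetical heavy-tailed model outputs (Laplace real and imaginary parts).
symbols = rng.laplace(size=n) + 1j * rng.laplace(size=n)

mixed, phases, perm = abstraction_layer(symbols, rng)
recovered = invert_layer(mixed, phases, perm)

kurt_in = excess_kurtosis(symbols.real)
kurt_out = excess_kurtosis(mixed.real)
print(f"excess kurtosis before: {kurt_in:.2f}, after: {kurt_out:.2f}")
```

Because every step is unitary or a relabeling, block energy is preserved and the receiver can invert the layer exactly given the same phases and permutation, so the learned model itself is left untouched.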

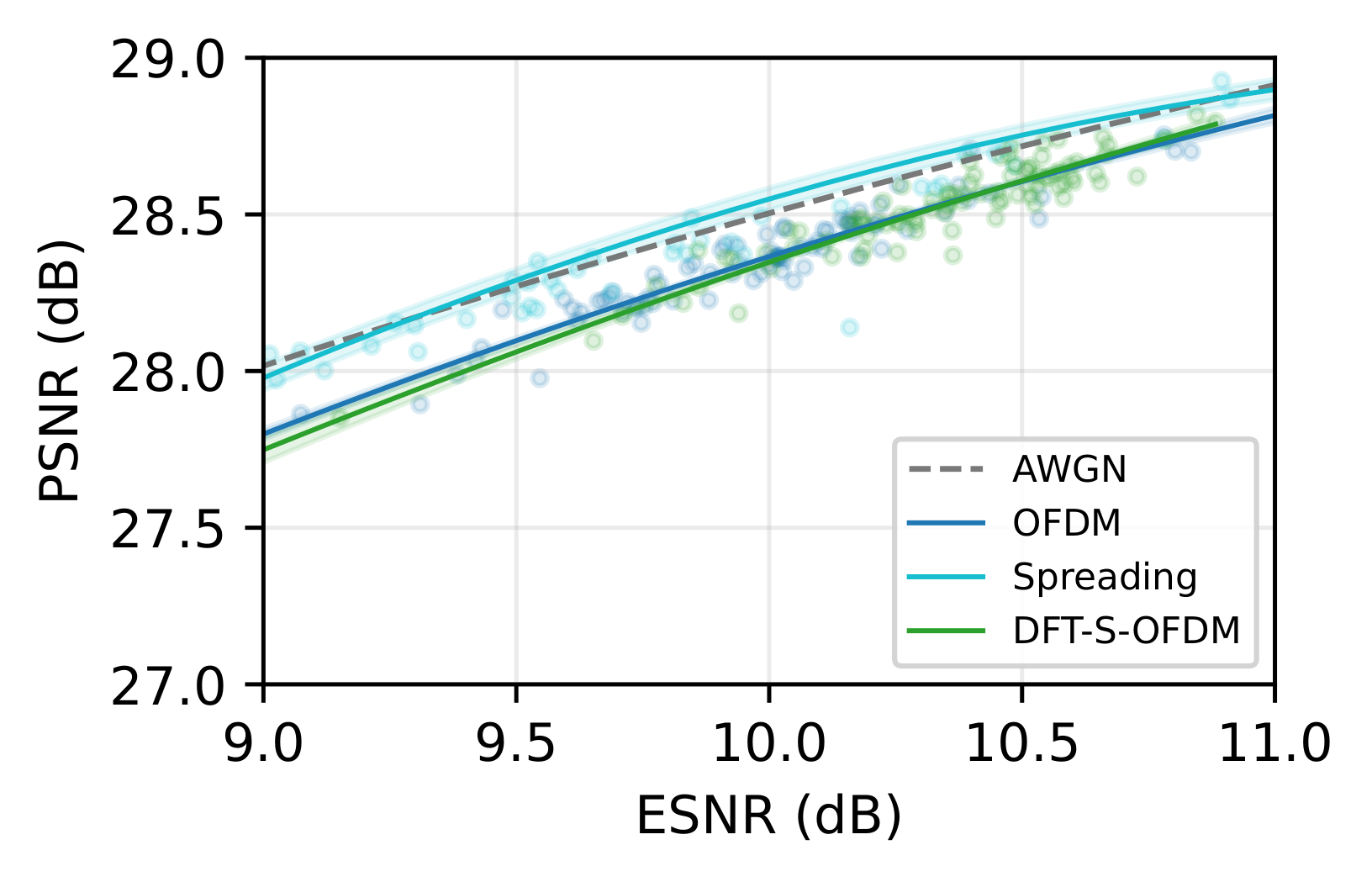

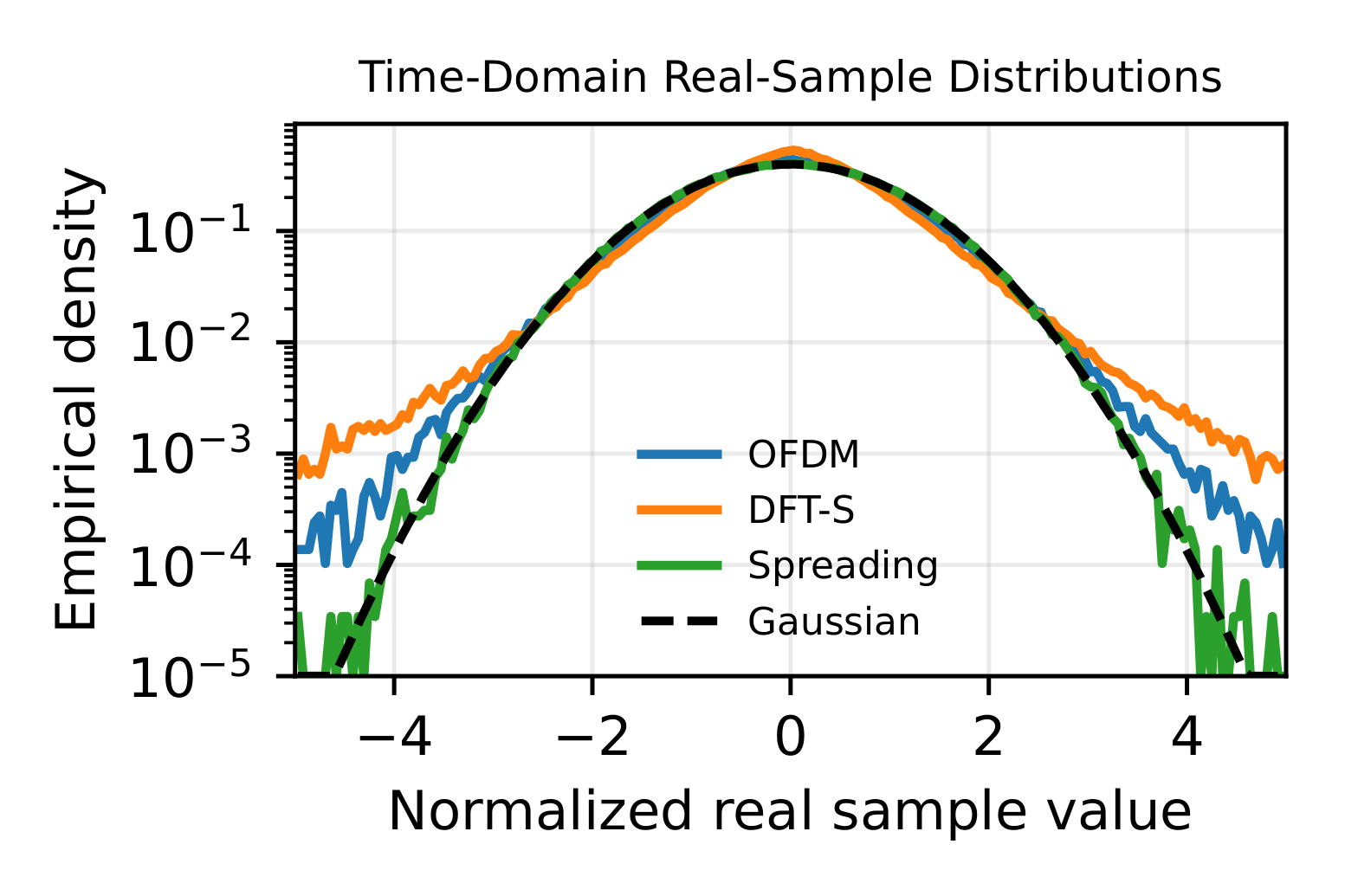

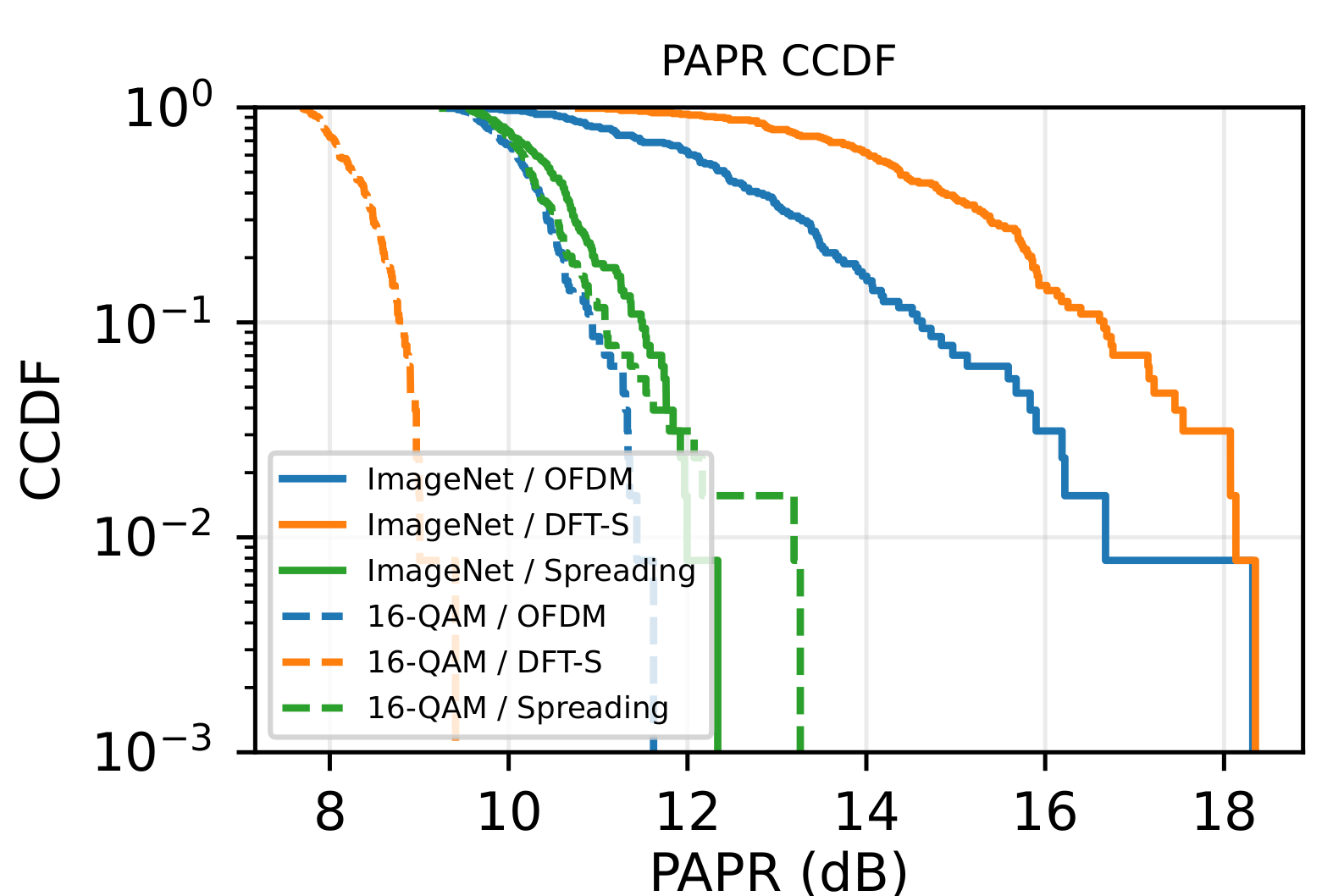

The experimental evidence is deliberately two-sided. On the AI-facing side, the prototype PSNR curve tests whether waveform choice improves end-task reconstruction under real residual errors. On the RF-facing side, the waveform marginal plot shows that the same semantic symbols induce different time-domain sample distributions depending on the waveform, which changes the statistics seen by the RF front-end.
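For readers unfamiliar with the AI-facing metric, peak signal-to-noise ratio (PSNR) is conventionally computed from the mean squared error between a reference and its reconstruction. The helper below uses the standard definition; the function name `psnr_db` and the default `peak=1.0` (data normalized to [0, 1]) are assumptions for illustration, since the summary does not state the normalization used in the prototype.

```python
import numpy as np

def psnr_db(reference, reconstruction, peak=1.0):
    """PSNR in dB: 10 * log10(peak^2 / MSE). Returns inf for a perfect match."""
    reference = np.asarray(reference, dtype=float)
    reconstruction = np.asarray(reconstruction, dtype=float)
    mse = np.mean((reference - reconstruction) ** 2)
    if mse == 0.0:
        return float("inf")
    return float(10.0 * np.log10(peak**2 / mse))

# A uniform error of 0.1 on unit-peak data gives an MSE of 0.01, i.e. 20 dB.
reference = np.zeros((8, 8))
reconstruction = reference + 0.1
print(round(psnr_db(reference, reconstruction), 6))
```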

Key Results

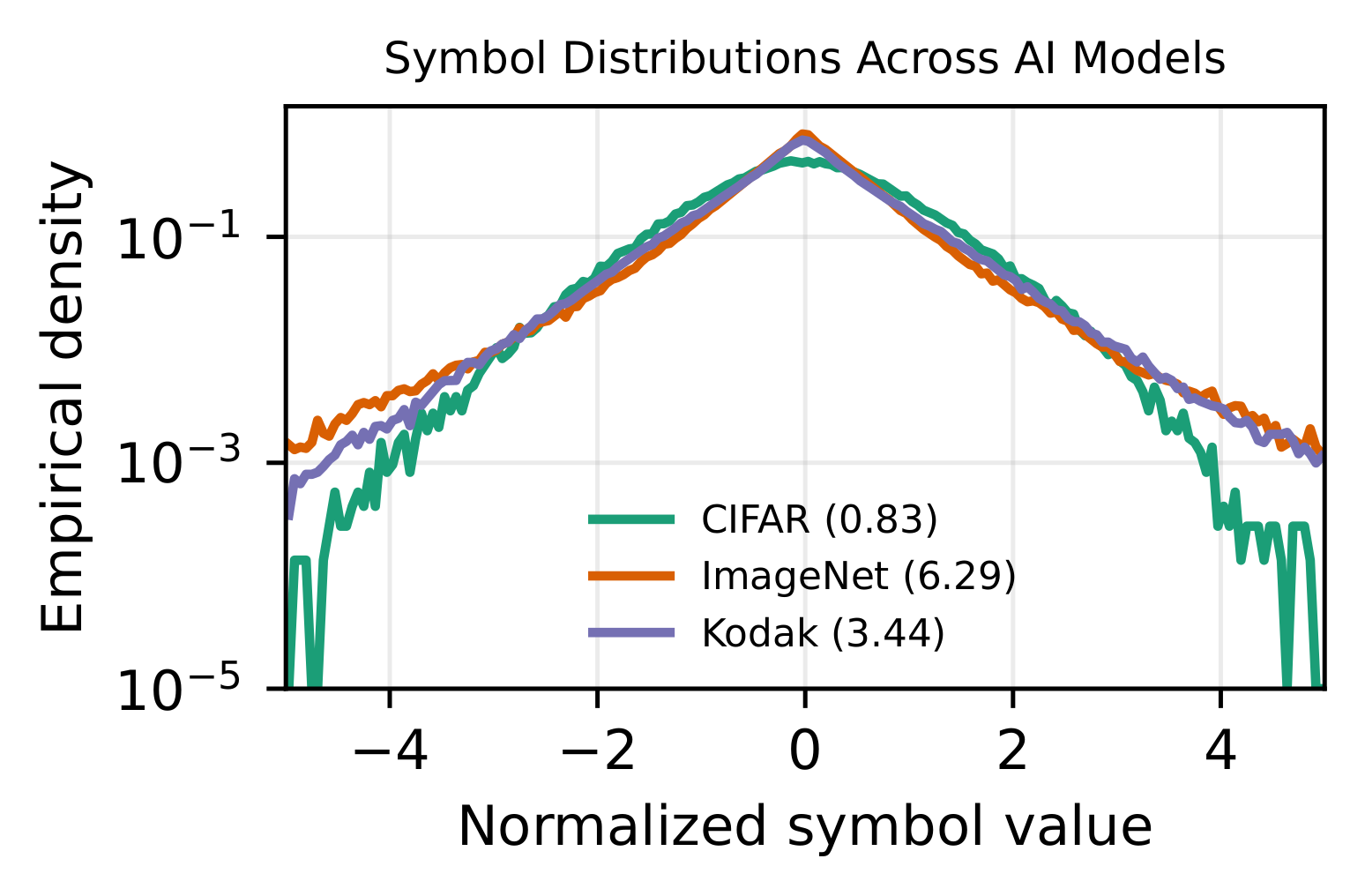

- AI-generated semantic symbols make waveform behavior depart from conventional fixed-constellation intuition.

- For heavy-tailed inputs, a waveform that passes symbol statistics through to the time-domain samples can produce worse RF peaks than one that mixes them.

- The spreading waveform improves OTA semantic reconstruction compared with raw OFDM and DFT-S-OFDM in the reported prototype study.

- Waveform choice changes time-domain sample distributions and peak-to-average power ratio (PAPR) behavior, which matters for RF front-end design.

- The main takeaway is architectural: waveform design can be the statistical interface that makes AI-RAN models more portable.
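The peak-related takeaways above can be explored with a small numerical experiment. The sketch below is not the paper's setup: it assumes Student-t symbols with 3 degrees of freedom as a stand-in for heavy-tailed AI outputs, an idealized full-band DFT-S-OFDM without oversampling or pulse shaping (where DFT precoding followed by the IFFT returns the symbols themselves), and raw OFDM as the "mixing" waveform. The block size, block count, and all names are hypothetical.

```python
import numpy as np

def papr_db(x):
    """Peak-to-average power ratio of one block of complex samples, in dB."""
    power = np.abs(x) ** 2
    return float(10.0 * np.log10(power.max() / power.mean()))

rng = np.random.default_rng(1)
n_sub, n_blocks = 1024, 200
papr_preserve, papr_mix = [], []

for _ in range(n_blocks):
    # Hypothetical heavy-tailed symbols (Student-t, 3 degrees of freedom).
    s = rng.standard_t(3, n_sub) + 1j * rng.standard_t(3, n_sub)

    # Idealized full-band DFT-S-OFDM: precoding and IFFT cancel, so the
    # time-domain samples keep the symbol statistics.
    preserved = np.fft.ifft(np.fft.fft(s, norm="ortho"), norm="ortho")

    # Raw OFDM: the IFFT mixes all symbols into every time-domain sample.
    mixed = np.fft.ifft(s, norm="ortho")

    papr_preserve.append(papr_db(preserved))
    papr_mix.append(papr_db(mixed))

mean_preserve = float(np.mean(papr_preserve))
mean_mix = float(np.mean(papr_mix))
print(f"mean PAPR, statistics preserved: {mean_preserve:.1f} dB")
print(f"mean PAPR, statistics mixed:     {mean_mix:.1f} dB")
```

With fixed constellations such as QPSK the usual intuition runs the other way, which is why heavy-tailed semantic symbols break it. Sample-rate PAPR without oversampling underestimates analog peaks, so the numbers here indicate a trend rather than a front-end specification.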