Train Once, Deploy Broadly: Waveform Transformation for AWGN-Trained Semantic Communications

Waveform transformation for robust semantic communications without retraining.

Abstract

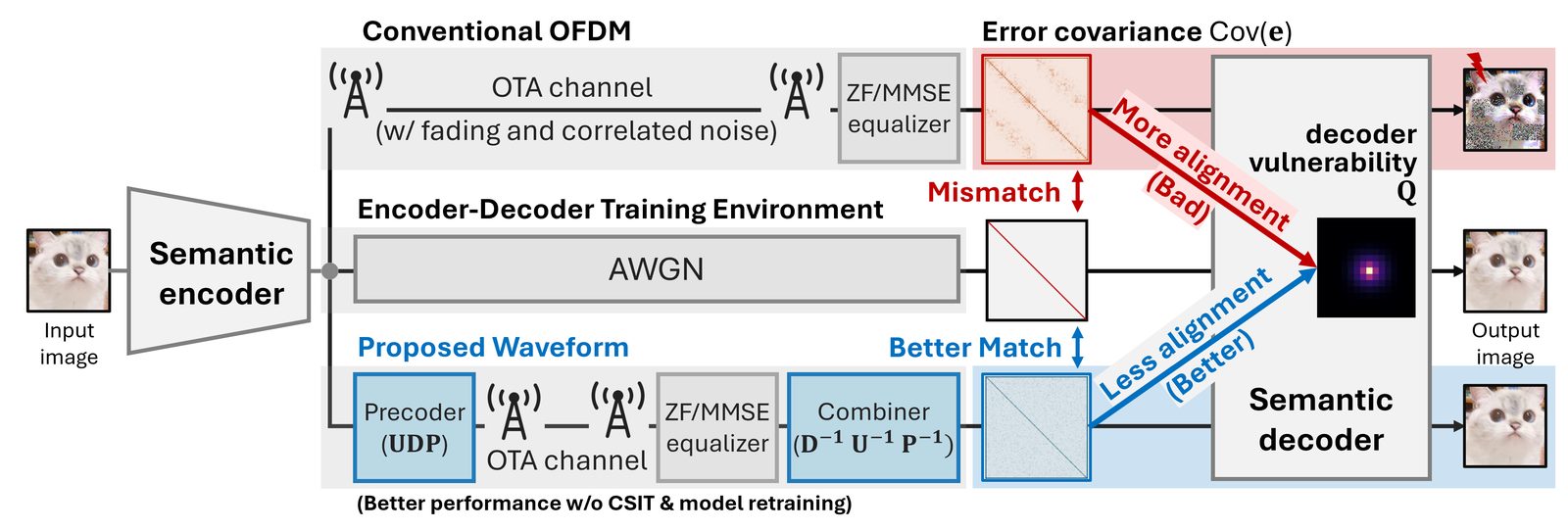

Deep joint source-channel coding (DeepJSCC)-based semantic communication systems are typically trained under additive white Gaussian noise (AWGN), whereas practical wireless links produce error statistics that differ significantly from this assumption. This mismatch fundamentally limits their deployability, particularly in orthogonal frequency division multiplexing (OFDM) systems where the decoder-input error remaining after channel estimation and equalization is structured, correlated, and non-Gaussian. In this work, we investigate a decoder-aware framework that characterizes this mismatch via a second-order vulnerability model and motivates adapting the waveform, rather than the model, to the deployment environment. We propose a fixed waveform-level processing framework based on unitary spreading, phase dithering, and symbol permutation, which reshapes error statistics to better match the training assumptions. The proposed design reduces error correlation, promotes Gaussian-like behavior, and improves reconstruction quality by up to 0.9 dB in peak signal-to-noise ratio (PSNR) over raw OFDM. More broadly, it illustrates how waveform design can serve as a statistical interface between learned representations and physical channels, enabling a training-deployment decoupling paradigm for robust semantic communications without retraining or transmitter-side channel state information.

Why This Matters

The obvious fix for deployment mismatch is retraining, but that scales poorly. Every deployment condition, hardware chain, or propagation environment then becomes a model-management problem. This paper reframes the issue as an interface problem: the learned decoder expects AWGN-like perturbations, while practical OFDM delivers structured residual errors.

That viewpoint separates learning from deployment. If waveform processing can stabilize the error statistics seen by the decoder, the semantic codec can remain fixed while the physical interface absorbs part of the deployment variability.

What This Paper Does

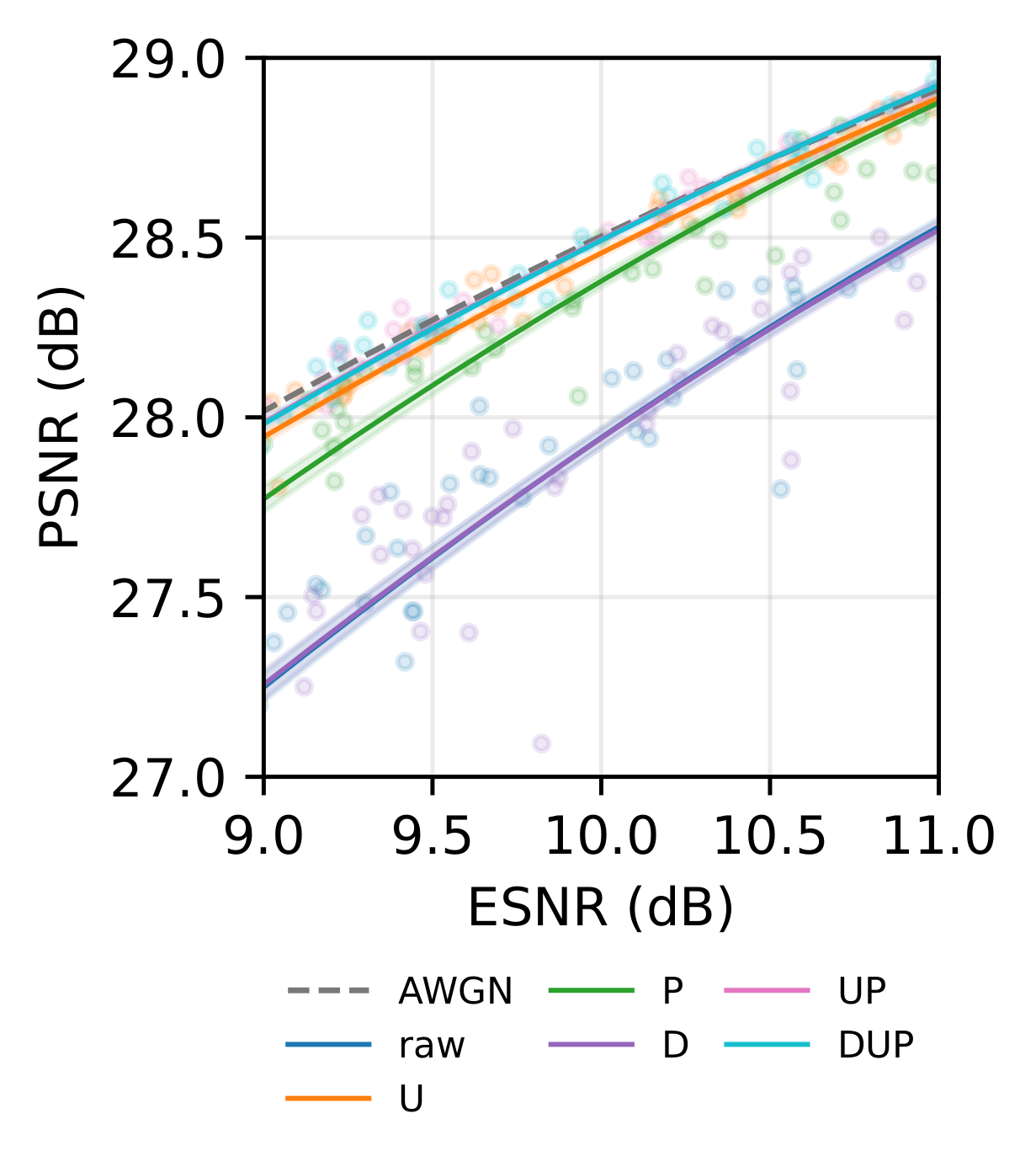

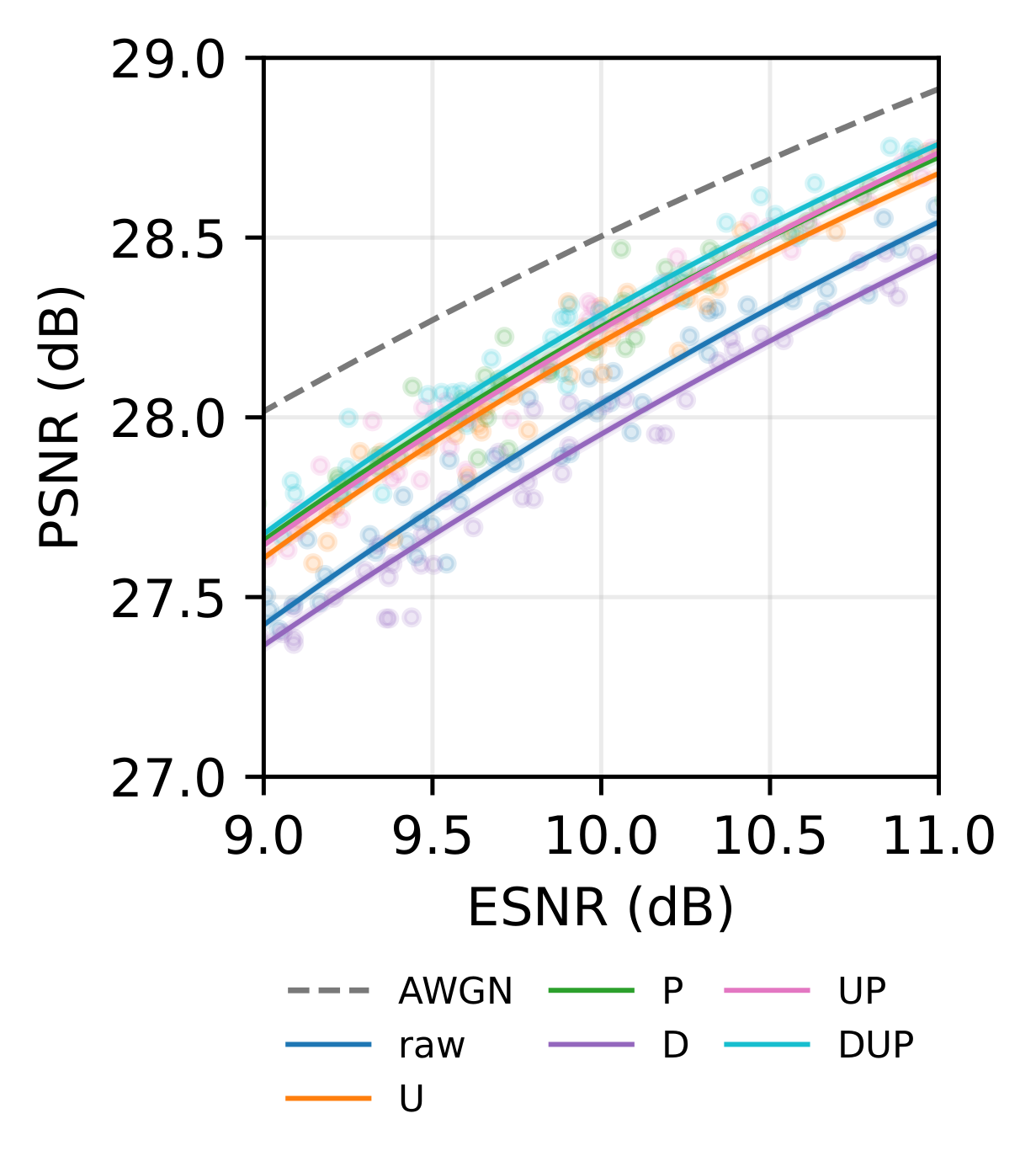

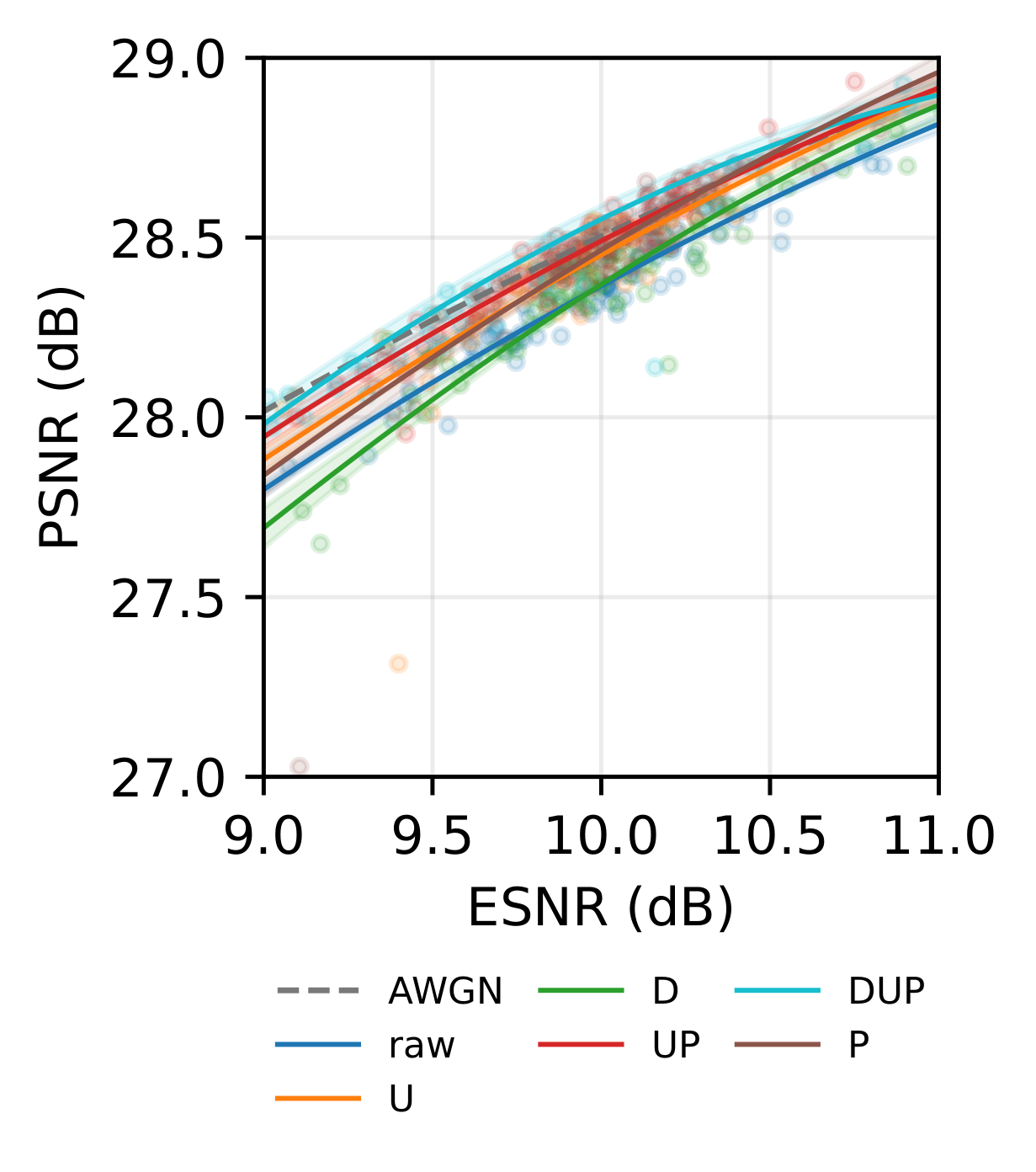

The paper introduces a decoder-centric vulnerability model. Rather than measuring only average error power, it asks whether the deployment error covariance aligns with the directions that most strongly affect reconstruction loss. This explains why two errors with the same ESNR can yield different semantic quality.
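The sketch below makes the alignment argument concrete with a generic second-order Taylor expansion. This framing is an assumption and is not taken from the paper. Let L be the reconstruction loss, viewed as a function of a real-valued decoder input z (real and imaginary parts stacked), and let e be a zero-mean error with covariance Σ. Then E[L(z + e)] ≈ L(z) + ½ tr(HΣ), where H is the Hessian of L at z. The Hessian here is hypothetical. The two covariances have the same trace, so they have the same average error power, but they produce very different penalties.

```python
import numpy as np

def second_order_penalty(hessian, cov):
    """Second-order estimate of the expected loss increase, 0.5 * tr(H @ Sigma)."""
    return 0.5 * float(np.trace(hessian @ cov))

n = 16
# Hypothetical decoder sensitivity: the loss curves 10x more sharply
# along the first 4 input dimensions than along the remaining 12.
hessian = np.diag(np.r_[np.full(4, 10.0), np.full(12, 1.0)])

total_power = 1.6  # same average error power (same trace) for both cases
# Error concentrated in the decoder-sensitive directions.
cov_aligned = np.diag(np.r_[np.full(4, total_power / 4), np.zeros(12)])
# AWGN-like error: the same power spread evenly over all directions.
cov_white = np.eye(n) * (total_power / n)

print(np.trace(cov_aligned), np.trace(cov_white))  # equal power
print(second_order_penalty(hessian, cov_aligned))  # ≈ 8.0
print(second_order_penalty(hessian, cov_white))    # ≈ 2.6
```

Average power alone cannot separate these two cases. Only the overlap between Σ and the high-curvature directions of H does.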

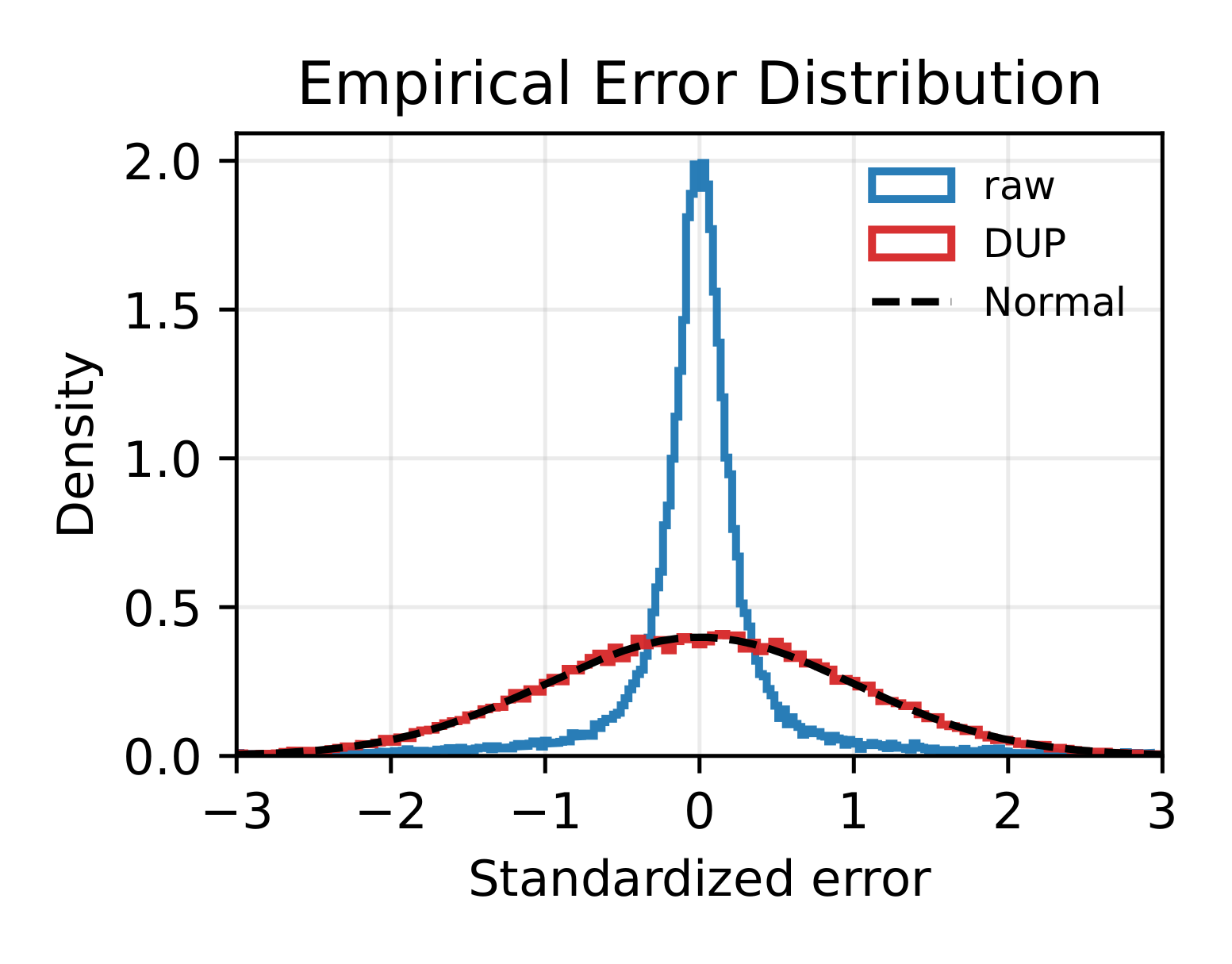

The waveform then targets three effects. Spreading averages diagonal error power and promotes Gaussian-like marginals. Phase dithering weakens coherent off-diagonal structure before mixing. Permutation disperses structured correlation away from decoder-sensitive local neighborhoods. The resulting design requires no retraining and no transmitter-side CSI.
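The three operations can be written as a single unitary transmit matrix T = U D P, with R = T^H applied at the receiver. The decoder-input error is then R e. What follows is a minimal NumPy sketch, not the paper's implementation. Several choices are assumptions made for illustration:

- the normalized DFT as the spreading matrix,
- the ordering permute, dither, spread,
- the shared-seed dither,
- the synthetic error models.

The sketch checks two of the stated effects. First, frequency-selective error power becomes flat on the diagonal. Second, a sparse impulsive error ends up with a fourth-moment ratio much closer to the circular complex Gaussian value of 2.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 64  # symbols per block (hypothetical)

# Unitary spreading: normalized DFT matrix (one common choice, assumed here).
U = np.fft.fft(np.eye(n)) / np.sqrt(n)
# Phase dither: unit-modulus diagonal, assumed known to both ends via a shared seed.
D = np.diag(np.exp(1j * rng.uniform(0.0, 2.0 * np.pi, n)))
# Symbol permutation: P @ z == z[perm].
perm = rng.permutation(n)
P = np.eye(n)[perm]

T = U @ D @ P   # transmitter: permute, dither, then spread (ordering assumed)
R = T.conj().T  # receiver inverse; T is unitary, so R @ T == I

# 1) Diagonal averaging: frequency-selective error power on the channel side.
p = rng.exponential(1.0, n)  # hypothetical per-subcarrier error power
cov_decoder = R @ np.diag(p) @ R.conj().T
print(np.allclose(np.diag(cov_decoder).real, p.mean()))  # True

# 2) Gaussianization: sparse impulsive error on the channel side.
def normalized_fourth_moment(x):
    """E|x|^4 / (E|x|^2)^2, which equals 2 for circular complex Gaussian data."""
    p2 = np.mean(np.abs(x) ** 2)
    return np.mean(np.abs(x) ** 4) / p2 ** 2

num_blocks = 2000
hits = rng.random((num_blocks, n)) < 0.1  # about 10% of symbols hit per block
impulses = hits * (rng.standard_normal((num_blocks, n))
                   + 1j * rng.standard_normal((num_blocks, n)))
e_decoder = impulses @ R.T                  # applies R @ e to every row e
print(normalized_fourth_moment(impulses))   # far above 2 (heavy-tailed)
print(normalized_fourth_moment(e_decoder))  # much closer to 2
```

The diagonal result is exact for DFT spreading. Every entry of R has magnitude 1/sqrt(n), so each decoder-input symbol sees the average of the per-subcarrier error powers. Dithering and permutation leave that diagonal unchanged.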

Key Results

- Practical OFDM errors decompose into colored equalized noise and symbol-dependent residual equalization terms.

- Semantic distortion depends on alignment between deployment error covariance and decoder vulnerability, not only on average ESNR.

- Spreading makes the measured decoder-input error marginal substantially more Gaussian-like.

- Permutation becomes especially useful under MMSE equalization, where symbol-dependent residual structure matters more.

- Simulation and USRP measurements both show that waveform processing recovers reconstruction quality without changing the semantic model.
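The first result can be illustrated with a generic single-tap model per subcarrier, assumed here rather than taken from the paper: y_k = h_k x_k + n_k, equalized by w_k. The error w_k y_k - x_k then splits into two parts:

- (w_k h_k - 1) x_k, which depends on the transmitted symbol;
- w_k n_k, whose power |w_k|² σ² varies across subcarriers.

The sketch assumes perfect CSI, hypothetical Rayleigh gains and QPSK symbols. Under those assumptions ZF leaves no residual term while MMSE does, which is consistent with the observation that symbol-dependent structure matters more under MMSE. With imperfect channel estimates, ZF would also acquire a residual term.

```python
import numpy as np

rng = np.random.default_rng(1)
n_sc, noise_var = 64, 0.1  # hypothetical subcarrier count and noise variance

# Hypothetical Rayleigh subcarrier gains, unit-power QPSK symbols, and AWGN.
h = (rng.standard_normal(n_sc) + 1j * rng.standard_normal(n_sc)) / np.sqrt(2)
x = (rng.choice([-1.0, 1.0], n_sc) + 1j * rng.choice([-1.0, 1.0], n_sc)) / np.sqrt(2)
noise = np.sqrt(noise_var / 2) * (rng.standard_normal(n_sc)
                                  + 1j * rng.standard_normal(n_sc))
y = h * x + noise

def split_error(w):
    """Return (residual, colored_noise) with w*y - x == residual + colored_noise."""
    residual = (w * h - 1) * x  # symbol-dependent equalization residual
    colored_noise = w * noise   # power |w_k|^2 * noise_var differs per subcarrier
    assert np.allclose(w * y - x, residual + colored_noise)
    return residual, colored_noise

equalizers = {
    "ZF": 1 / h,                                        # perfect-CSI zero forcing
    "MMSE": np.conj(h) / (np.abs(h) ** 2 + noise_var),  # unit-power symbols assumed
}
powers = {}
for name, w in equalizers.items():
    residual, colored = split_error(w)
    powers[name] = (np.mean(np.abs(residual) ** 2), np.mean(np.abs(colored) ** 2))
    print(name, "residual power:", powers[name][0], "noise power:", powers[name][1])
```

MMSE trades a smaller colored-noise term for a nonzero residual term. That residual is tied to the transmitted symbols, so it is not independent of the signal the way AWGN is.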

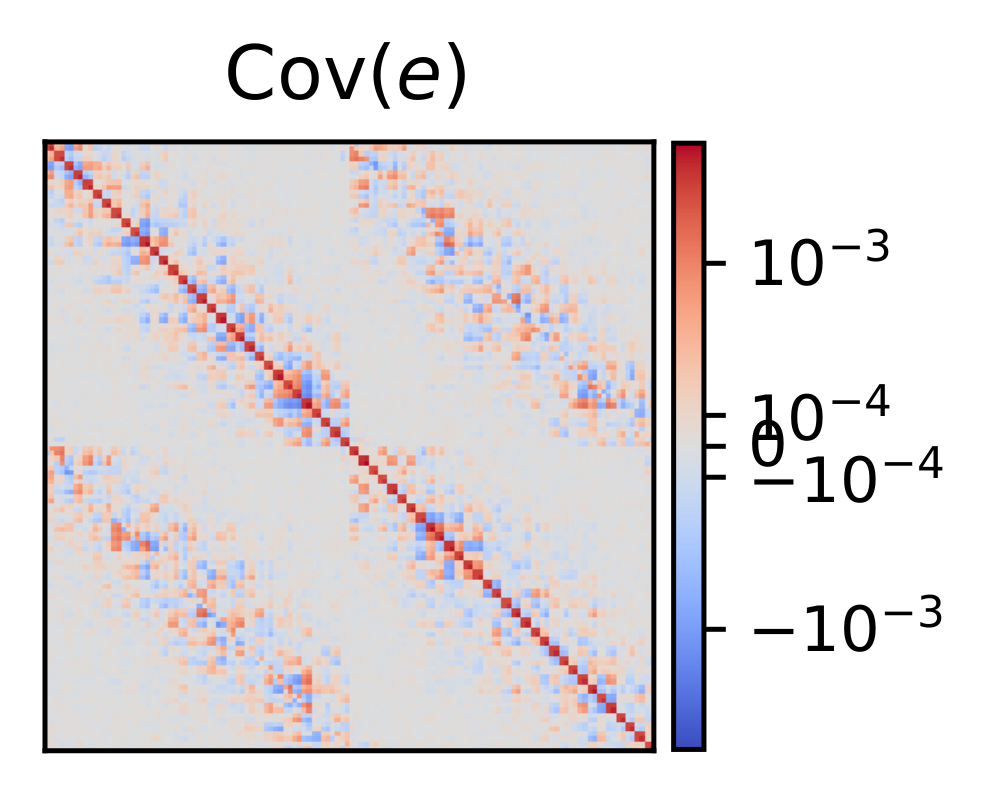

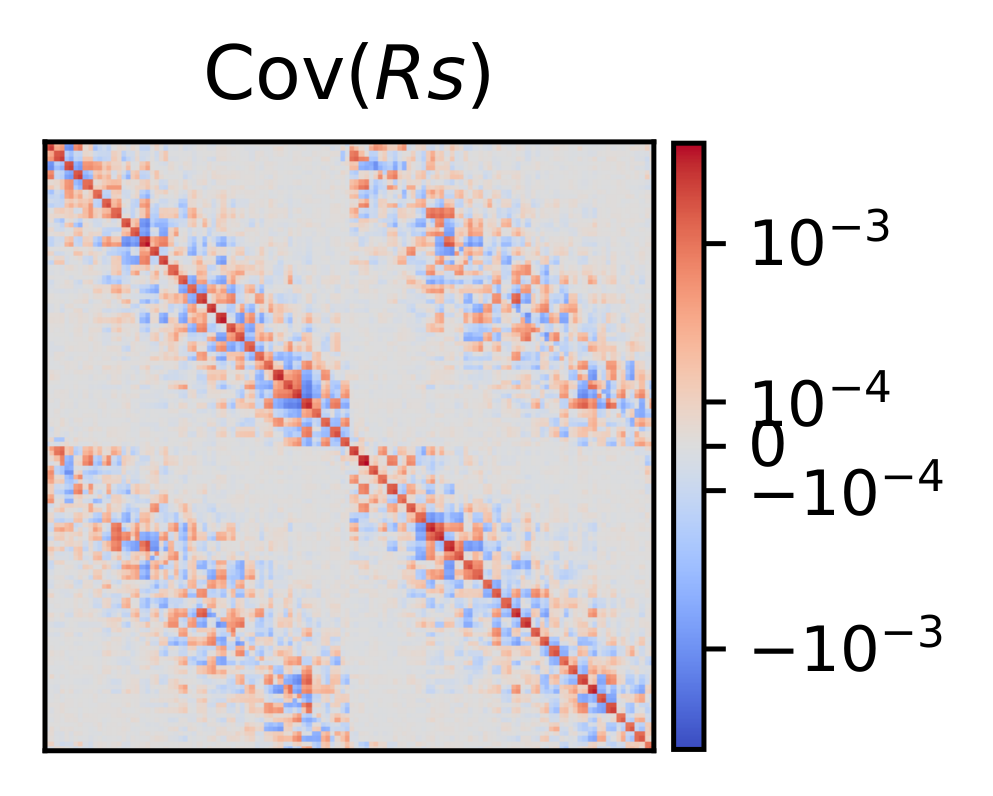

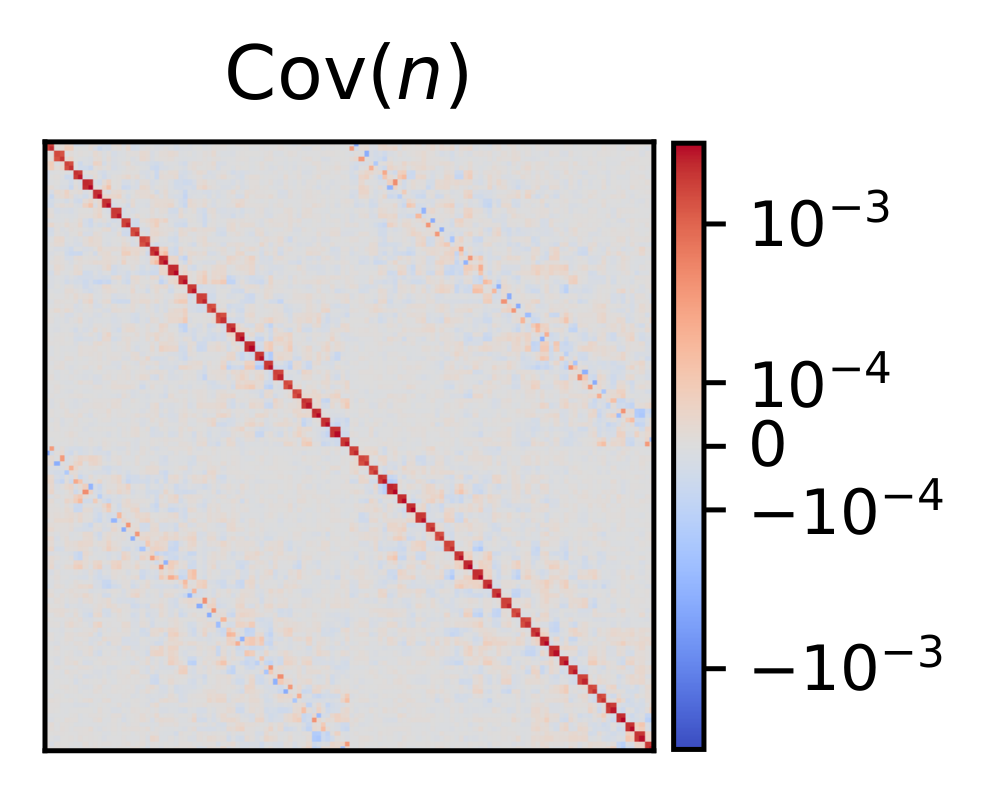

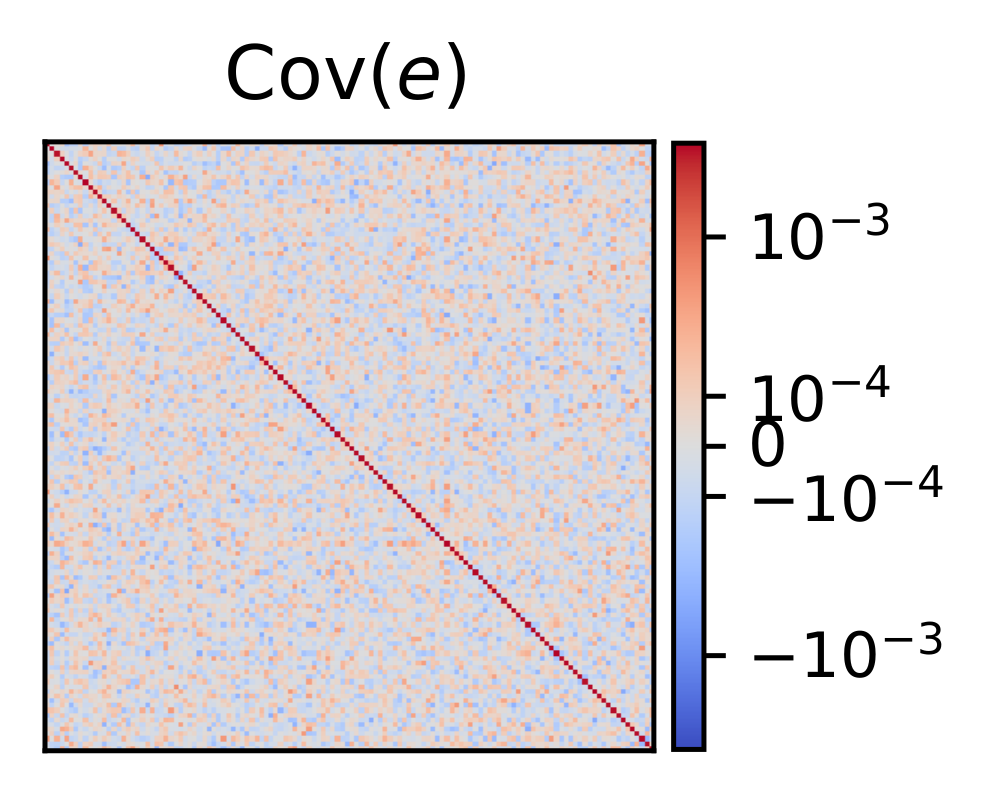

Error Covariance Reshaping

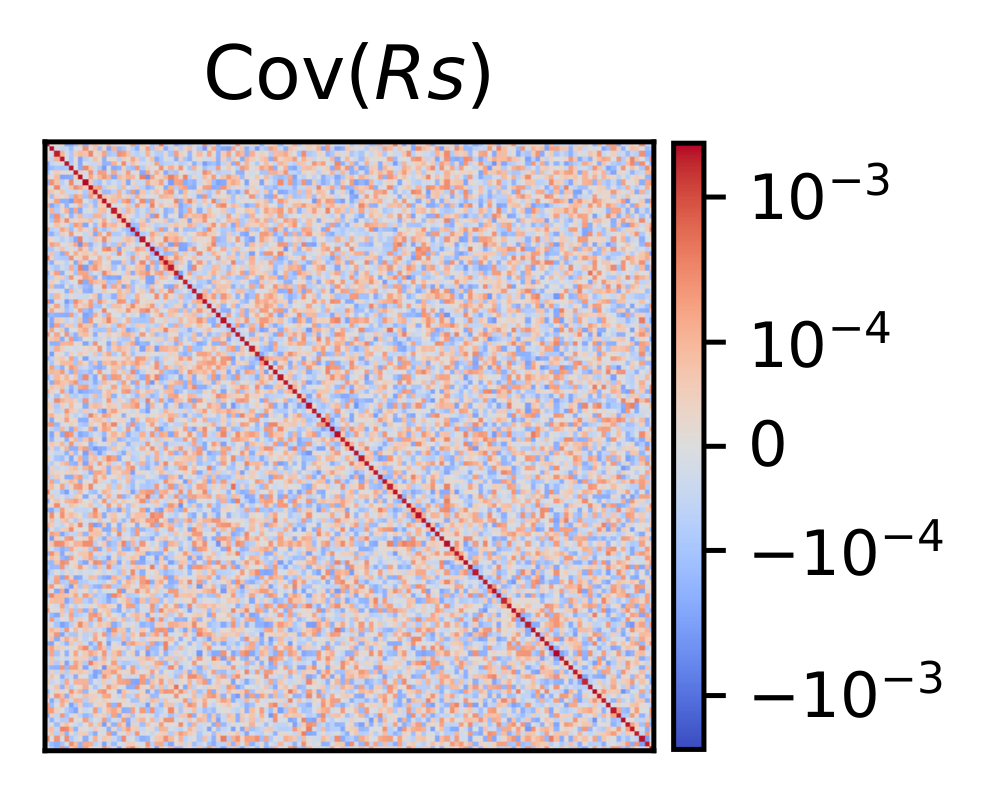

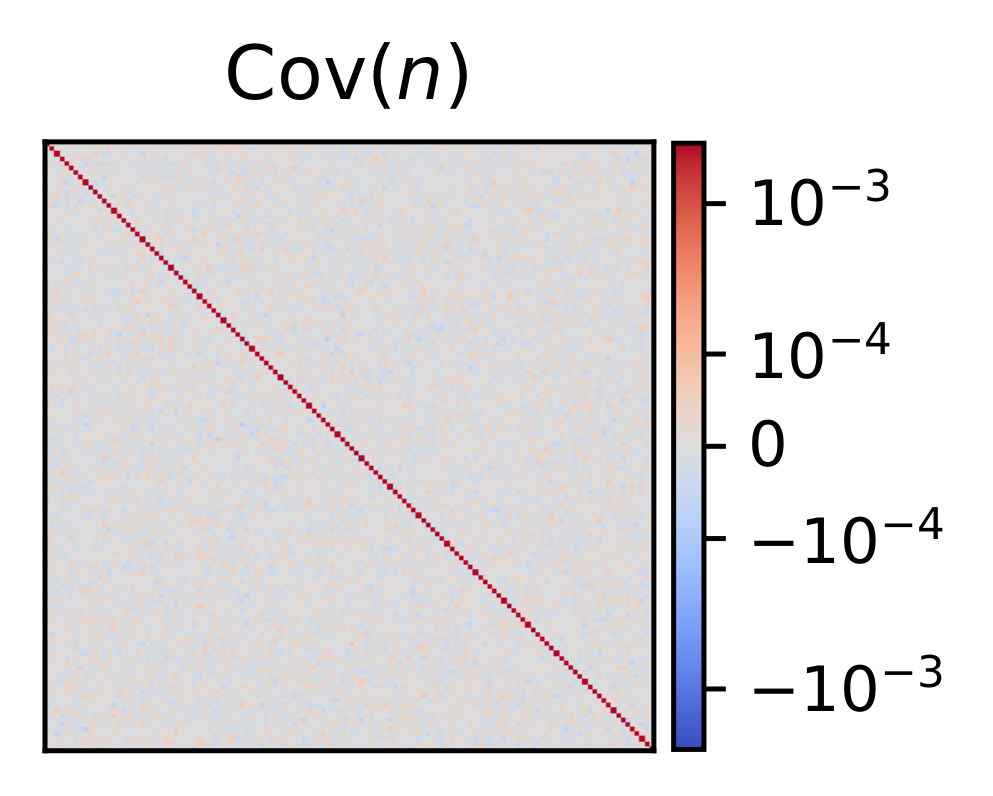

The covariance maps show what the waveform does beyond improving a scalar PSNR curve. In raw practical OFDM, the decoder-input error has structured covariance: the total error mixes a symbol-dependent residual term and a colored equalized-noise term. After the proposed DUP waveform processing and the receiver-side inverse permutation, diagonal power is smoother and structured off-diagonal components are dispersed, which reduces harmful alignment with the decoder vulnerability structure.
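Here is a minimal sketch of the dispersal effect of permutation alone. The AR(1)-style channel-side covariance ρ^|i-j| and the neighborhood width are hypothetical stand-ins for "decoder-sensitive local neighborhoods", and the metric is an illustrative choice, not the paper's. The sketch compares the share of off-diagonal covariance energy within a few positions of the diagonal before and after the inverse permutation.

```python
import numpy as np

def local_offdiag_fraction(cov, width):
    """Share of off-diagonal covariance energy with 0 < |i - j| <= width."""
    n = cov.shape[0]
    i, j = np.indices((n, n))
    off_diag = i != j
    local = off_diag & (np.abs(i - j) <= width)
    energy = np.abs(cov) ** 2
    return energy[local].sum() / energy[off_diag].sum()

n, rho, width = 64, 0.9, 2
idx = np.arange(n)
# Hypothetical channel-side error: strong correlation between nearby symbols.
cov_channel = rho ** np.abs(idx[:, None] - idx[None, :])

rng = np.random.default_rng(2)
perm = rng.permutation(n)    # transmitter: z -> z[perm]
inv_perm = np.argsort(perm)  # receiver: undo the permutation
# Covariance of e[inv_perm], the error after the inverse permutation.
cov_decoder = cov_channel[np.ix_(inv_perm, inv_perm)]

print(local_offdiag_fraction(cov_channel, width))  # large share near the diagonal
print(local_offdiag_fraction(cov_decoder, width))  # much smaller share
```

Permutation leaves the total off-diagonal energy unchanged and only relocates it. For that reason the structure is described as dispersed rather than removed.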